Most AI page tools grade their own homework. Lens doesn't.

The judge is not the writer

One AI suggests a change. A different AI grades it. They don't share notes. So the grader has no reason to be polite about a bad idea.

Three judges, not one

Three different customers vote on every change. The change only wins when most of them say it is better.

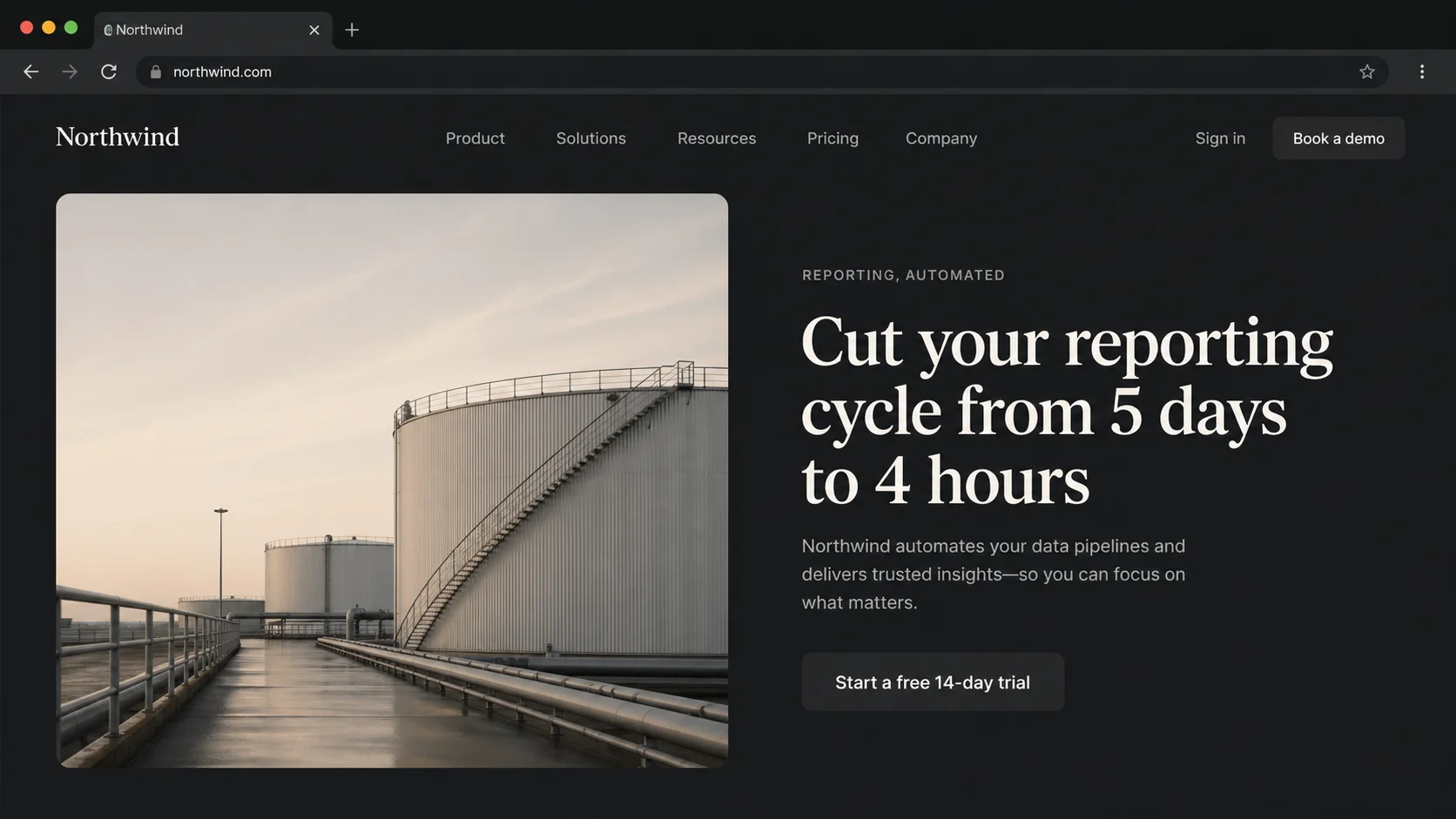

They look at pictures, not promises

Each customer votes by looking at a real picture of your page, the same way your visitors see it. Not at words an AI used to describe its own work.

Three customers. One verdict.

Each one reads your page through their own eyes. They vote on their own. They never see what the others said.

The Skeptic

Has never heard of you. Wants to leave. Asks 'why should I care?' about every line on the page.

The Target Customer

Built from your brand. Speaks your customer's language. Reads the page the way your real buyer would.

The Mobile User

Same person, on a phone. One hand. Walking. Out of patience in seconds.

The product is the diff.

One pass on a typical homepage hero. Three customers. One accepted change. Four of the seven scores went up.

Illustrative example. Your run produces real screenshots, real per-persona rationale, and real per-axis scores from your live page.

How it works.

One AI suggests a change. Three customers vote. The page only changes when most say yes.

Look

We take pictures of your page on desktop and on a phone. All three customers grade it on seven things.

Try

A different AI picks one small thing to change (a headline, a button, a proof point) and tries it on a copy of your page.

Vote

All three see before and after. They don't know which is which. The change wins only when most say 'after is better.'

Keep or drop

Good changes stay. Bad ones go. We stop when new changes stop winning the vote.

What every customer checks.

Seven things a real visitor cares about before they click. Each customer looks at all seven, before and after every change.

Clarity

Within five seconds, what is this page about?

Value

Why should this person pick you? Is the answer obvious?

Call to action

What should I do next? Is the button easy to spot and easy to click?

Trust

Logos, reviews, guarantees. Does this page look real?

Relevance

Does this match what this person was looking for?

Friction

Long forms. Slow loads. Phone problems. What gets in the way?

Desire

Setting reasons aside, does this make them want it?

“Lens proposed nine changes to our home page and we shipped four. The five it rejected would have hurt us. That’s the part I didn’t expect.”

- Mauro, HikmaAI

Questions people ask.

How is this different from an A/B testing tool?

A/B tools test two versions you already built, on real visitors, over weeks. Lens comes up with the versions for you, gets three different customers to vote in under an hour, then hands you a list ready to ship. You can still A/B test the winners. Lens just kills the guesswork upstream.

Can it break my page?

No. Lens works on a copy of your page. Your real site is never touched. Changes are kept small and safe by design. You see every change before you ship it, and you decide what goes live.

What does Lens actually change?

Small, focused changes to words, styling, and links. One thing at a time. A few changes per run. Big design moves, like rearranging your pricing table, are not what Lens does. That stays a designer's job.

Why three customers?

One judge brings one set of blind spots. Two judges can split and leave you with a tie. Three with majority agreement give you a verdict that no single bias can easily ruin. Most changes Lens proposes get rejected. That is the point.

Want Lens to score your home page?

Send us the URL. We'll run Lens against your live page and walk you through the report.